LLM support

Has any assessment been made as to which of the LLMs currently provides the better Pharo coding support?

Kind regards,

Arild Dyrseth

Not long ago I tried one of the freely available AI systems that was

supposed to be especially good at coding problems.

I gave it five simple tasks, and it horribly flubbed every one of them.

Even getting syntactically correct code in a less common language

took a lot of prompting.

A while back, someone displayed some AI-generated "Smalltalk" code in

this mailing list.

It didn't work.

"better Pharo support" means what, exactly?

Do your requirements include generating correct code?

It would be valuable for someone to conduct some experiments and

report them here.

On Mon, 11 Aug 2025 at 08:04, Arild Dyrseth via Pharo-users

pharo-users@lists.pharo.org wrote:

Has any assessment been made as to which of the LLMs currently provide the better Pharo coding support ?

Kind regards,

Arild Dyrseth

Ah, the heck with it. Here's an experiment I did just now.

Prompt:

Write a method in Pharo Smalltalk to determine the line termination

convention of a text file,

given the name of the file.

Response:

detectLineEndingInFileNamed: filename

|file contents crlfCount crCount lfCount|

file := FileStream readOnlyFileNamed: fileName.

contents := file next: 10000.

file close.

crlfCount := 0.

crCount := 0.

lfCount := 0.

1 to: contents size - 1 do: [:index |

|current next|

current := contents at: index.

current := contents at: index + 1.

(current = 13 and: [next = 10])

ifTrue: [crlfCount := crlfCount + 1]].

1 to: contents size do: [:index |

|current|

current := contents at: index.

current = 10 ifTrue: [lfCount := ifCount + 1].

current = 13 ifTrue: [crCount := crCount + 1]].

^Dictionare new

at: #crlf put: crlfCount;

at: #cr put: crCount;

at: #lf put: lfCount;

yourself.

The first thing that hit me was that this was better than I expected.

The second thing I noticed was that it doesn't actually solve the problem.

It reports some counts, but it makes no determination.

It should be something like

(lfCount >= crCount and: [lfCount >= crlfCount]) ifTrue: [^#lf].

(crCount >= lfCount and: [crCount >= crlfCount]) ifTrue: [^#cr].

^#crlf

The next thing to catch my eye was the use of two loops, when one would do,

and pondering that showed me that THE LF COUNT IS INCORRECT.

Each line ending with CR+LF will be counted twice, once as an instance of CR+LF

and once as an instance of LF. Write the loop as

prev := 32 "Character space codePoint".
contents do: [:each |
    each = 10
        ifTrue: [
            prev = 13
                ifTrue: [crlfCount := crlfCount + 1]
                ifFalse: [lfCount := lfCount + 1]]
        ifFalse: [
            each = 13 ifTrue: [crCount := crCount + 1]].
    prev := each].
crCount := crCount - crlfCount.

And then it hit me. Pharo doesn't have a FileStream class any more.

It should be something like

stream := fileName asFileReference binaryReadStream.

Finally, some files really do have outrageously long lines. 10000 is an arbitrary choice. The only reason it's needed is to avoid trying to load a big file into memory, but the only reason to try to load a big file into memory is to scan the contents twice, which we do not need. We could just read all the bytes of the file one by one. But with the arbitrary limit, there will be files where this gives misleading answers.
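Pulling the corrections above together, a minimal sketch might look like the following. It is a sketch under assumptions, not a vetted implementation: the name detect is made up for illustration, it is written as a Playground snippet rather than as a method, and ties and empty input fall through to #lf exactly as in the determination suggested above.

```smalltalk
"Playground sketch. detect is a hypothetical name, not an existing Pharo API."
| detect |
detect := [:stream |
	| prev byte crCount lfCount crlfCount |
	crCount := lfCount := crlfCount := 0.
	prev := 32. "Character space codePoint"
	"One pass; only the previous and the current byte are held in memory."
	[stream atEnd] whileFalse: [
		byte := stream next.
		byte = 10
			ifTrue: [
				prev = 13
					ifTrue: [crlfCount := crlfCount + 1]
					ifFalse: [lfCount := lfCount + 1]]
			ifFalse: [
				byte = 13 ifTrue: [crCount := crCount + 1]].
		prev := byte].
	"CRs that started a CR+LF pair are not lone CRs."
	crCount := crCount - crlfCount.
	(lfCount >= crCount and: [lfCount >= crlfCount])
		ifTrue: [#lf]
		ifFalse: [
			(crCount >= lfCount and: [crCount >= crlfCount])
				ifTrue: [#cr]
				ifFalse: [#crlf]]].

"In-memory bytes make small test inputs easy; this one answers #crlf."
detect value: #[97 13 10 98 13 10] readStream.
```

For a real file, something like `'some/file.txt' asFileReference binaryReadStreamDo: [:s | detect value: s]` should work, with the stream closed when the block returns; the path is of course a placeholder.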

So we have a simple prompt for a simple method with four problems:

- a bug that prevents running tests at all (FileStream)

- a bug that will be found immediately by testing (wrong kind of answer)

- a bug that will be found by testing (CR being counted twice)

- a bug that will probably not be found by testing (misleading answers for large files with mixed conventions or exceedingly long first line)

This has been my experience every time I've tried to use AI to generate code.

Bugs bugs bugs, to the point where it's less work to write the code

myself than to debug the AI's.

On Mon, 11 Aug 2025 at 10:10, Richard O'Keefe raoknz@gmail.com wrote:

Not long ago I tried one of the freely available AI systems that was

supposed to be especially good at coding problems.

I gave it five simple tasks, and it horribly flubbed every one of them.

Even getting syntactically correct code in a less common language

took a lot of prompting.

A while back, someone displayed some AI-generated "Smalltalk" code in

this mailing list.

It didn't work.

"better Pharo support" means what, exactly?

Do your requirements include generating correct code?

It would be valuable for someone to conduct some experiments and

report them here.


You didn't comment on this beauty:

current := contents at: index.

current := contents at: index + 1.

(current = 13 and: [next = 10])


Cheers

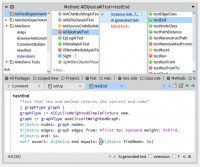

I have so far only tried it using Mistral (picked because I want to try an LLM from the EU).

It is not easy to make it work, and I am still at a preliminary stage of my experiments. You are welcome to download and try it from: https://github.com/kasperosterbye/PharoAIActions.

I was looking for some classes that had non-trivial methods but did not depend on a lot of other things. The classes I have tried are those in the package 'AI-Algorithms-Graph' (part of Pharo for some years).

I just tried to ask my experimental test builder “AIATestGenerator” to make tests for all methods in class AIDijkstra.

AIATestGenerator buildAll: AIDijkstra

It gives this result

My current conclusion is that it is not 100% good, but it is mostly faster to let it build them all and then fix the errors it makes.

But this is the result for a class that has low dependency on other classes and does not access the outside world. Writing tests for something like Epicea, with its dependency on files - no...

— Kasper


And if you are too disappointed by how an LLM writes tests, you can relax your mind like this:

Please make a haiko for this method:

reset

self nodes do: [ :node |

node

pathDistance: Float infinity;

visited: false;

previousNode: nil ]

And get something like this:

Nodes become empty,

Distance is now infinite,

A new start begins.

— Kasper

I gave Claude Opus 4.1 a shot (I did not test the result, as I am on a road trip).

Two artefacts were created.

First one

detectLineTerminationConvention: aFilename
    "Detect the line termination convention used in a text file.
    Returns #cr, #lf, #crlf, or #unknown"
    | fileReference contents crCount lfCount crlfCount index |
    "Create file reference and check if file exists"
    fileReference := aFilename asFileReference.
    fileReference exists ifFalse: [
        ^ self error: 'File does not exist: ', aFilename
    ].
    "Read file contents as binary to preserve line endings"
    contents := fileReference binaryReadStream contents.
    contents isEmpty ifTrue: [ ^ #unknown ].
    "Initialize counters"
    crCount := 0.
    lfCount := 0.
    crlfCount := 0.
    index := 1.
    "Scan through the content looking for line terminators"
    [ index <= contents size ] whileTrue: [
        | currentByte nextByte |
        currentByte := contents at: index.
        "Check for CR (13) followed by LF (10)"
        (currentByte = 13 and: [ index < contents size ]) ifTrue: [
            nextByte := contents at: index + 1.
            nextByte = 10
                ifTrue: [
                    "Found CRLF"
                    crlfCount := crlfCount + 1.
                    index := index + 2 ]
                ifFalse: [
                    "Found standalone CR"
                    crCount := crCount + 1.
                    index := index + 1 ]
        ] ifFalse: [
            "Check for standalone LF (10)"
            currentByte = 10 ifTrue: [
                lfCount := lfCount + 1 ].
            index := index + 1
        ]
    ].
    "Determine the predominant line ending convention"
    (crlfCount > 0 and: [ crlfCount >= crCount and: [ crlfCount >= lfCount ] ])
        ifTrue: [ ^ #crlf ].
    (lfCount > 0 and: [ lfCount >= crCount ])
        ifTrue: [ ^ #lf ].
    crCount > 0
        ifTrue: [ ^ #cr ].
    ^ #unknown

Second one :

"FileLineEndingDetector - A utility class for detecting and converting line endings"
Object subclass: #FileLineEndingDetector
    instanceVariableNames: ''
    classVariableNames: ''
    package: 'FileUtilities'

"Class-side methods"

FileLineEndingDetector class >> detectLineEndingIn: aFilename
    "Main method to detect line ending convention in a file"
    | fileReference contents |
    fileReference := aFilename asFileReference.
    fileReference exists ifFalse: [
        ^ self error: 'File does not exist: ', aFilename
    ].
    contents := fileReference binaryReadStream contents.
    ^ self detectLineEndingInBytes: contents

FileLineEndingDetector class >> detectLineEndingInBytes: aByteArray
    "Detect line ending convention in a byte array"
    | crCount lfCount crlfCount index result |
    aByteArray isEmpty ifTrue: [ ^ #unknown ].
    crCount := 0.
    lfCount := 0.
    crlfCount := 0.
    index := 1.
    [ index <= aByteArray size ] whileTrue: [
        | currentByte |
        currentByte := aByteArray at: index.
        "Check for CRLF sequence"
        (currentByte = 13 and: [ index < aByteArray size and: [ (aByteArray at: index + 1) = 10 ] ])
            ifTrue: [
                crlfCount := crlfCount + 1.
                index := index + 2 ]
            ifFalse: [
                "Check for standalone CR or LF"
                currentByte = 13 ifTrue: [ crCount := crCount + 1 ].
                currentByte = 10 ifTrue: [ lfCount := lfCount + 1 ].
                index := index + 1 ]
    ].
    "Determine the predominant convention"
    result := self determineConvention: crCount lf: lfCount crlf: crlfCount.
    ^ result

FileLineEndingDetector class >> determineConvention: crCount lf: lfCount crlf: crlfCount
    "Determine the line ending convention based on counts"
    | total |
    total := crCount + lfCount + crlfCount.
    total = 0 ifTrue: [ ^ #unknown ].
    "If CRLF is present and dominant, it's Windows"
    (crlfCount > 0 and: [ crlfCount >= (total * 0.8) ])
        ifTrue: [ ^ #crlf ].
    "If LF is dominant, it's Unix/Linux"
    (lfCount > 0 and: [ lfCount >= (total * 0.8) ])
        ifTrue: [ ^ #lf ].
    "If CR is dominant, it's old Mac"
    (crCount > 0 and: [ crCount >= (total * 0.8) ])
        ifTrue: [ ^ #cr ].
    "Mixed line endings detected"
    ^ #mixed

FileLineEndingDetector class >> getLineEndingInfo: aFilename
    "Get detailed information about line endings in a file"
    | fileReference contents info |
    fileReference := aFilename asFileReference.
    fileReference exists ifFalse: [
        ^ Dictionary new
            at: #error put: 'File does not exist';
            yourself
    ].
    contents := fileReference binaryReadStream contents.
    info := self analyzeLineEndings: contents.
    info at: #filename put: aFilename.
    info at: #size put: fileReference size.
    ^ info

FileLineEndingDetector class >> analyzeLineEndings: aByteArray
    "Analyze and return detailed information about line endings"
    | crCount lfCount crlfCount index info |
    crCount := 0.
    lfCount := 0.
    crlfCount := 0.
    index := 1.
    [ index <= aByteArray size ] whileTrue: [
        | currentByte |
        currentByte := aByteArray at: index.
        (currentByte = 13 and: [ index < aByteArray size and: [ (aByteArray at: index + 1) = 10 ] ])
            ifTrue: [
                crlfCount := crlfCount + 1.
                index := index + 2 ]
            ifFalse: [
                currentByte = 13 ifTrue: [ crCount := crCount + 1 ].
                currentByte = 10 ifTrue: [ lfCount := lfCount + 1 ].
                index := index + 1 ]
    ].
    info := Dictionary new.
    info at: #cr put: crCount.
    info at: #lf put: lfCount.
    info at: #crlf put: crlfCount.
    info at: #total put: (crCount + lfCount + crlfCount).
    info at: #convention put: (self determineConvention: crCount lf: lfCount crlf: crlfCount).
    ^ info

FileLineEndingDetector class >> convertFile: aFilename to: aConvention
    "Convert a file to use a specific line ending convention"
    | fileReference contents convertedContents |
    fileReference := aFilename asFileReference.
    fileReference exists ifFalse: [
        ^ self error: 'File does not exist: ', aFilename
    ].
    contents := fileReference contents.
    convertedContents := self convertString: contents to: aConvention.
    fileReference writeStreamDo: [ :stream |
        stream nextPutAll: convertedContents
    ].
    ^ true

FileLineEndingDetector class >> convertString: aString to: aConvention
    "Convert a string to use a specific line ending convention"
    | normalized newLineString |
    "First normalize to LF only"
    normalized := aString copyReplaceAll: String crlf with: String lf.
    normalized := normalized copyReplaceAll: String cr with: String lf.
    "Then convert to target convention"
    aConvention = #lf ifTrue: [ ^ normalized ].
    aConvention = #crlf ifTrue: [
        newLineString := String crlf.
        ^ normalized copyReplaceAll: String lf with: newLineString
    ].
    aConvention = #cr ifTrue: [
        newLineString := String cr.
        ^ normalized copyReplaceAll: String lf with: newLineString
    ].
    ^ normalized

"Extension methods for FileReference"

FileReference >> detectLineEnding
    "Detect the line ending convention of this file"
    ^ FileLineEndingDetector detectLineEndingIn: self fullName

FileReference >> lineEndingInfo
    "Get detailed line ending information for this file"
    ^ FileLineEndingDetector getLineEndingInfo: self fullName

FileReference >> convertLineEndingTo: aConvention
    "Convert this file to use a specific line ending convention"
    ^ FileLineEndingDetector convertFile: self fullName to: aConvention

"Usage examples:"
"
""Basic detection""
FileLineEndingDetector detectLineEndingIn: '/path/to/file.txt'.
""Using FileReference extension""
'/path/to/file.txt' asFileReference detectLineEnding.
""Get detailed information""
FileLineEndingDetector getLineEndingInfo: '/path/to/file.txt'.
""Convert file to Unix line endings""
'/path/to/file.txt' asFileReference convertLineEndingTo: #lf.
""Convert file to Windows line endings""
FileLineEndingDetector convertFile: '/path/to/file.txt' to: #crlf.

This was given with some explanations. It does not seem so bad to me. It uses ByteArray. Questionable?

Character-Based Line Ending Detection for Pharo

https://claude.ai/public/artifacts/ed4d065b-4d66-401e-b41c-a094c8c5435c

Something I'd like to do is use Claude Code (I used the chat here; the terminal mode has more memory and agentic features), which is quite mind-blowing to me. Ideally, I'd like to make it ingest some good-quality code, or why not all the mini image code (or the VM?).

I think there must be ways to use Claude Code efficiently (.claude stuff, etc.) that would make the writing "personalized".

My 2 cents.

Cedrick.

No, that beauty was my fault. The free AI deleted the response while

I was in the middle of copying it (why didn't copy+paste work? Darned

if I know), so I was racing to reconstruct it from memory.

By the way, it's not just me who has experiences like what I intended

to report. In an R blog today I came across someone who had converted

R to Python using an AI tool and the translation wasn't just wrong, it

managed to crash Python. I lost the place so I can't give you the

link, sorry.

On Mon, 11 Aug 2025 at 14:30, Richard Sargent rsargent@5x5.on.ca wrote:

You didn't comment on this beauty:

current := contents at: index.

current := contents at: index + 1.

(current = 13 and: [next = 10])


There is of course a major issue and several minor issues in that code.

The major issue is using #contents. That's just nuts. All we ever need

to hold in memory is 2 bytes, not the whole possibly extremely large file.

The factoring in my library goes like this:

BasicInputStream>>lineTerminationConvention "where the core algorithm goes"

ReadOnlyByteArray>>lineTerminationConvention
    ^self readStream bindOwn: [:stream | stream lineTerminationConvention]

Filename>>lineTerminationConvention
    ^(FileStream read: self type: #binary) bindOwn: [:stream | stream lineTerminationConvention]

and having the method on ReadOnlyByteArray made it much easier to set up
test cases (which of course found a bug).

Using Characters instead of bytes in this algorithm would be strongly

inadvisable.

In astc, one of the core functions of an external text input stream is to

take care of encoding, INCLUDING line terminator, so that Smalltalk code

gets a Character cr whatever the current line ends with, making it quite

impossible for the algorithm to work with Characters. (This is one reason

why the sketch I gave above does NOT include a definition for

ReadOnlyString>>lineTerminationConvention, although it easily could; the

only line termination that ever has any business turning up in a string is

Character cr. This was very carefully engineered and makes life SO much

simpler.)

Guessing the line termination convention is a matter of inspecting the

EXTERNAL ENCODING of a file, just like trying to guess whether it is Latin1

or UTF8 or UTF16. Almost by definition you have to look at the bytes;

that's what it MEANS to scrutinise the external representation.

I notice the presence of the magic number 0.8. Why 0.8 rather than 0.83666

or 0.7502? And of course this code raises an issue which I had not thought

about in my code, which is "why base the decision on the proportion of the

terminators rather than their recency?" By this I mean, suppose you write

a file under Windows, some sort of log perhaps, and you get 50 lines with

CR+LF terminators. And then you switch to appending to it from WSL, and

you append another 40 lines with LF terminators. Shouldn't that be

evidence that the convention used with the file has changed and it's now

an LF file rather than a CRLF one? Perhaps updates should be computed

using exponential decay, so that instead of

fooCount := fooCount + 1

we have

fooCount := fooCount * decayRate + 1.

barCount := barCount * decayRate.

ughCount := ughCount * decayRate.
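To make the recency idea concrete, here is a hedged Playground sketch of decayed scoring in Pharo. Everything named in it is an assumption made for illustration: the block name recentConvention, the decay rate 0.9, and the choice to defer a CR until the next byte shows whether it starts a CR+LF pair. Ties, including empty input, fall to #lf because it is listed first.

```smalltalk
"Playground sketch. recentConvention and the 0.9 rate are illustrative assumptions."
| recentConvention bytes |
recentConvention := [:stream :decayRate |
	| scores bump pendingCR byte |
	scores := Dictionary new.
	#(#lf #cr #crlf) do: [:kind | scores at: kind put: 0.0].
	"Every terminator decays all three scores, then adds 1 to the kind just seen."
	bump := [:seen |
		#(#lf #cr #crlf) do: [:kind |
			scores at: kind put: (scores at: kind) * decayRate].
		scores at: seen put: (scores at: seen) + 1].
	pendingCR := false.
	[stream atEnd] whileFalse: [
		byte := stream next.
		(pendingCR and: [byte = 10])
			ifTrue: [bump value: #crlf. pendingCR := false]
			ifFalse: [
				"A CR not followed by LF was a lone CR."
				pendingCR ifTrue: [bump value: #cr].
				pendingCR := byte = 13.
				byte = 10 ifTrue: [bump value: #lf]]].
	pendingCR ifTrue: [bump value: #cr].
	#(#lf #cr #crlf) detectMax: [:kind | scores at: kind]].

"50 lines ending in CR+LF followed by 40 lines ending in LF, as in the log example."
bytes := ByteArray streamContents: [:out |
	50 timesRepeat: [out nextPutAll: #[120 13 10]].
	40 timesRepeat: [out nextPutAll: #[120 10]]].

recentConvention value: bytes readStream value: 0.9.
```

With these inputs the decayed scores favour #lf, whereas plain counting (50 against 40) would say #crlf. A decay rate of 1 reduces this to plain counting.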

It is not clear why #unknown is distinguished from #mixed. For my

purposes, it was essential that a definite

decision was made. For that reason, my code actually uses

(FileStream read: self type: #binary ifAbsent: [#[] readStream]) bindOwn:

[...]

to open and close the file.

And that raises the question, "what is to be done when the file does not

exist". And in fact we need to consider four cases: file exists and is a

readable file, file exists and is a readable directory, file exists but the

program has no permission to read it, and file does not exist. Again, for

my purposes, the right answers were

- file exists and is a readable file: process it

- file exists and is a readable directory: let the opening method raise a ChannelWillNotOpen exception

- file exists and is not readable: let the opening method raise a ChannelWillNotOpen exception

- file does not exist: pretend it's empty.
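In Pharo terms, the four cases above might be sketched roughly as follows. This is an assumption-laden translation, not the code from my library: withBinaryStream is a made-up name, ChannelWillNotOpen does not exist in Pharo, and the directory and no-permission cases simply let whatever error Pharo's own opening code raises propagate.

```smalltalk
"Playground sketch. withBinaryStream is a hypothetical name."
| withBinaryStream |
withBinaryStream := [:fileName :aBlock |
	| ref |
	ref := fileName asFileReference.
	ref exists
		"Readable file: process it. Directory or unreadable file: the open fails and the error propagates."
		ifTrue: [ref binaryReadStreamDo: aBlock]
		"Missing file: pretend it is empty."
		ifFalse: [aBlock value: #[] readStream]].

"Assuming no file of this name exists, the block sees an empty stream."
withBinaryStream value: 'surely-missing-file.txt' value: [:stream | stream atEnd].
```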

Calling #error: in this code seems rather pointless. Not that it's wrong,

although it is an arbitrary choice not to raise an exception. The point

is that a decision has been made here by the AI that is not grounded on

anything in the prompt, and reporting #unknown or #mixed or the magic

number 0.8 appear to also be decisions that are not grounded on anything in

the prompt. Certainly not on anything in my prompt.

I want to emphasise that there is nothing special about "determine the line

ending convention" and that my code is surely subject to criticism in its

turn. And that's precisely the point. It's not just AIs that smuggle in

decisions that are not grounded in the requirements and might make the code

technically correct but unfit for purpose. People do it all the time.

This is why code inspections are such a useful technique. There's a dance

between using the requirements to debug the code and using the code to

debug the requirements, where the (pragmatically) right thing for the AI

or the human to do is to come back and ASK "What should I do with an empty

file?" or "Can I assume that files will always be small compared with

Smalltalk's memory?" or "Would it be OK to look at just the first line

terminator?" or any other question left unanswered by the current prompt.

Perhaps it is up to us as programmers-using-AIs to start not by saying

"write me a method <jabberwock> to <burble>" but by saying "write me some

test cases for a method <jabberwock> that <burbles>."
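As an illustration of starting from test cases, here is a small SUnit sketch. It is hypothetical throughout: the class name, the package name, and above all lineTerminationConvention, which stands for the method still to be written (assumed here to be an extension on ByteArray). The tests fail until that method exists, which is the point of writing them first. The last test additionally assumes a simple majority rule.

```smalltalk
TestCase subclass: #LineTerminationConventionTest
	instanceVariableNames: ''
	classVariableNames: ''
	package: 'LineEndingSketch-Tests'

LineTerminationConventionTest >> conventionOf: aByteArray
	"Hypothetical hook: route to whatever implementation is under test."
	^aByteArray lineTerminationConvention

LineTerminationConventionTest >> testLfOnly
	self assert: (self conventionOf: #[97 10 98 10]) equals: #lf

LineTerminationConventionTest >> testCrOnly
	self assert: (self conventionOf: #[97 13 98 13]) equals: #cr

LineTerminationConventionTest >> testCrLfOnly
	self assert: (self conventionOf: #[97 13 10 98 13 10]) equals: #crlf

LineTerminationConventionTest >> testCrLfIsNotAlsoCountedAsLf
	"Two CR+LF and one bare LF. Counting each CR+LF again as an LF
	 would wrongly answer #lf. Assumes a simple majority rule."
	self assert: (self conventionOf: #[97 13 10 98 13 10 99 10]) equals: #crlf
```

Empty input, files with no clear majority, and very large files are deliberately not asserted here, because the prompt does not settle them; each one is a question to take back to whoever owns the requirements.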

On Mon, 11 Aug 2025 at 21:17, Cédrick Béler cdrick65@gmail.com wrote:

I gave a shot to Claude Opal 4.1 (did not test as on a road trip).

Two artefacts were created.

First one

detectLineTerminationConvention: aFilename

“Detect the line termination convention used in a text file.

Returns #cr, #lf, #crlf, or #unknown”

| fileReference contents crCount lfCount crlfCount index |

"Create file reference and check if file exists"

fileReference := aFilename asFileReference.

fileReference exists ifFalse: [

^ self error: 'File does not exist: ', aFilename

].

"Read file contents as binary to preserve line endings"

contents := fileReference binaryReadStream contents.

contents isEmpty ifTrue: [ ^ #unknown ].

"Initialize counters"

crCount := 0.

lfCount := 0.

crlfCount := 0.

index := 1.

"Scan through the content looking for line terminators"

[ index <= contents size ] whileTrue: [

| currentByte nextByte |

currentByte := contents at: index.

"Check for CR (13) followed by LF (10)"

(currentByte = 13 and: [ index < contents size ]) ifTrue: [

nextByte := contents at: index + 1.

nextByte = 10

ifTrue: [

"Found CRLF"

crlfCount := crlfCount + 1.

index := index + 2 ]

ifFalse: [

"Found standalone CR"

crCount := crCount + 1.

index := index + 1 ]

] ifFalse: [

"Check for standalone LF (10)"

currentByte = 10 ifTrue: [

lfCount := lfCount + 1 ].

index := index + 1

]

].

"Determine the predominant line ending convention"

(crlfCount > 0 and: [ crlfCount >= crCount and: [ crlfCount >= lfCount ] ])

ifTrue: [ ^ #crlf ].

(lfCount > 0 and: [ lfCount >= crCount ])

ifTrue: [ ^ #lf ].

crCount > 0

ifTrue: [ ^ #cr ].

^ #unknown

Second one :

“FileLineEndingDetector - A utility class for detecting and converting

line endings”

Object subclass: #FileLineEndingDetector

instanceVariableNames: ‘’

classVariableNames: ‘’

package: ‘FileUtilities’

“Class-side methods”

FileLineEndingDetector class >> detectLineEndingIn: aFilename

“Main method to detect line ending convention in a file”

| fileReference contents |

fileReference := aFilename asFileReference.

fileReference exists ifFalse: [

^ self error: 'File does not exist: ', aFilename

].

contents := fileReference binaryReadStream contents.

^ self detectLineEndingInBytes: contents

FileLineEndingDetector class >> detectLineEndingInBytes: aByteArray

“Detect line ending convention in a byte array”

| crCount lfCount crlfCount index result |

aByteArray isEmpty ifTrue: [ ^ #unknown ].

crCount := 0.

lfCount := 0.

crlfCount := 0.

index := 1.

[ index <= aByteArray size ] whileTrue: [

| currentByte |

currentByte := aByteArray at: index.

"Check for CRLF sequence"

(currentByte = 13 and: [ index < aByteArray size and: [ (aByteArray at:

index + 1) = 10 ] ])

ifTrue: [

crlfCount := crlfCount + 1.

index := index + 2 ]

ifFalse: [

"Check for standalone CR or LF"

currentByte = 13 ifTrue: [ crCount := crCount + 1 ].

currentByte = 10 ifTrue: [ lfCount := lfCount + 1 ].

index := index + 1 ]

].

"Determine the predominant convention"

result := self determineConvention: crCount lf: lfCount crlf: crlfCount.

^ result

FileLineEndingDetector class >> determineConvention: crCount lf: lfCount

crlf: crlfCount

“Determine the line ending convention based on counts”

| total |

total := crCount + lfCount + crlfCount.

total = 0 ifTrue: [ ^ #unknown ].

"If CRLF is present and dominant, it's Windows"

(crlfCount > 0 and: [ crlfCount >= (total * 0.8) ])

ifTrue: [ ^ #crlf ].

"If LF is dominant, it's Unix/Linux"

(lfCount > 0 and: [ lfCount >= (total * 0.8) ])

ifTrue: [ ^ #lf ].

"If CR is dominant, it's old Mac"

(crCount > 0 and: [ crCount >= (total * 0.8) ])

ifTrue: [ ^ #cr ].

"Mixed line endings detected"

^ #mixed

FileLineEndingDetector class >> getLineEndingInfo: aFilename

“Get detailed information about line endings in a file”

| fileReference contents info |

fileReference := aFilename asFileReference.

fileReference exists ifFalse: [

^ Dictionary new

at: #error put: 'File does not exist';

yourself

].

contents := fileReference binaryReadStream contents.

info := self analyzeLineEndings: contents.

info at: #filename put: aFilename.

info at: #size put: fileReference size.

^ info

FileLineEndingDetector class >> analyzeLineEndings: aByteArray

"Analyze and return detailed information about line endings"

| crCount lfCount crlfCount index info |

crCount := 0.

lfCount := 0.

crlfCount := 0.

index := 1.

[ index <= aByteArray size ] whileTrue: [

| currentByte |

currentByte := aByteArray at: index.

(currentByte = 13 and: [ index < aByteArray size and: [ (aByteArray at: index + 1) = 10 ] ])

ifTrue: [

crlfCount := crlfCount + 1.

index := index + 2 ]

ifFalse: [

currentByte = 13 ifTrue: [ crCount := crCount + 1 ].

currentByte = 10 ifTrue: [ lfCount := lfCount + 1 ].

index := index + 1 ]

].

info := Dictionary new.

info at: #cr put: crCount.

info at: #lf put: lfCount.

info at: #crlf put: crlfCount.

info at: #total put: (crCount + lfCount + crlfCount).

info at: #convention put: (self determineConvention: crCount lf: lfCount crlf: crlfCount).

^ info

FileLineEndingDetector class >> convertFile: aFilename to: aConvention

"Convert a file to use a specific line ending convention"

| fileReference contents convertedContents |

fileReference := aFilename asFileReference.

fileReference exists ifFalse: [

^ self error: 'File does not exist: ', aFilename

].

contents := fileReference contents.

convertedContents := self convertString: contents to: aConvention.

fileReference writeStreamDo: [ :stream |

stream nextPutAll: convertedContents

].

^ true

FileLineEndingDetector class >> convertString: aString to: aConvention

"Convert a string to use a specific line ending convention"

| normalized newLineString |

"First normalize to LF only"

normalized := aString copyReplaceAll: String crlf with: String lf.

normalized := normalized copyReplaceAll: String cr with: String lf.

"Then convert to target convention"

aConvention = #lf ifTrue: [ ^ normalized ].

aConvention = #crlf ifTrue: [

newLineString := String crlf.

^ normalized copyReplaceAll: String lf with: newLineString

].

aConvention = #cr ifTrue: [

newLineString := String cr.

^ normalized copyReplaceAll: String lf with: newLineString

].

^ normalized

"Extension methods for FileReference"

FileReference >> detectLineEnding

"Detect the line ending convention of this file"

^ FileLineEndingDetector detectLineEndingIn: self fullName

FileReference >> lineEndingInfo

"Get detailed line ending information for this file"

^ FileLineEndingDetector getLineEndingInfo: self fullName

FileReference >> convertLineEndingTo: aConvention

"Convert this file to use a specific line ending convention"

^ FileLineEndingDetector convertFile: self fullName to: aConvention

"Usage examples:"

"

""Basic detection""

FileLineEndingDetector detectLineEndingIn: '/path/to/file.txt'.

""Using FileReference extension""

'/path/to/file.txt' asFileReference detectLineEnding.

""Get detailed information""

FileLineEndingDetector getLineEndingInfo: '/path/to/file.txt'.

""Convert file to Unix line endings""

'/path/to/file.txt' asFileReference convertLineEndingTo: #lf.

""Convert file to Windows line endings""

FileLineEndingDetector convertFile: '/path/to/file.txt' to: #crlf.

"

This was given with some explanations. Seems not so bad to me. It uses

ByteArray. Questionable?

Character-Based Line Ending Detection for Pharo

https://claude.ai/public/artifacts/711cce71-a09d-4fa9-851b-cea4ae184463

https://claude.ai/public/artifacts/ed4d065b-4d66-401e-b41c-a094c8c5435c

Something I'd like to do is use Claude Code (I used the chat here; the

terminal mode has more memory and agentic features), which is quite

mind-blowing to me. Ideally, I'd like to make it ingest some good-quality

code, or why not all the mini image code (or the VM?).

I think there must be ways to use Claude Code efficiently (.claude files,

etc.) that would make the writing "personalized".

My 2 cents.

Cedrick.

So I took my own advice and asked Claude to write 10 test cases.

Problem 1: it was specifically asked to report LF or CR or CRLF. It not

only lowercased these, it added #none as an answer. (That's why I

explicitly listed the results in the prompt.) I asked it to fix that.

Problem 2: I explicitly said the method was to deal with external files.

Instead it assumed that the method belonged to String and wrote tests with

String data. I asked it to fix that.

Problem 3: It assumed that making strings with String cr and String lf and

String crlf and then writing these to an external file would work. There

is no reason to believe that these strings will be passed through

unchanged. Arguably they should all be mapped to the platform default line

terminator or to whatever terminator has been specified. I asked it to fix

this.

The final result looked ugly but OK. In fact, it was a good selection of

tests.

But it took fixing three serious mistakes in some trivial methods to get

there.

Interestingly, it missed a factoring. Something like

genericLineTerminatorTest: string expect: answer

...

testOne

self genericLineTerminatorTest: '' expect: #lf.

testTwo

self genericLineTerminatorTest: String crlf expect: #crlf.

and so on, instead of generating the ... code over and over again would

have been better. I wonder why it didn't do that?
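One way the factoring could look in Pharo is sketched below. This is not the code Claude produced nor the elided helper above: the class LineTerminatorTest, its package, the helper assertBytes:detectAs: and the stand-in conventionOf: are all made-up names, and conventionOf: only classifies the first terminator (answering #lf when there is none) so that the sketch has something to test. It also assumes an in-memory file system is an acceptable substitute for a file on disk, and it writes raw bytes rather than Strings so that no encoder gets a chance to rewrite the terminators (Problem 3 above).

```smalltalk
"Hypothetical SUnit sketch; the class, package and selector names are illustrative."
TestCase subclass: #LineTerminatorTest
    instanceVariableNames: ''
    classVariableNames: ''
    package: 'LineTerminator-Tests'

LineTerminatorTest >> conventionOf: aFileReference
    "Stand-in for the method under test: classify the first terminator,
     answering #lf when the file contains none."
    ^ aFileReference binaryReadStreamDo: [ :in |
        | answer |
        answer := nil.
        [ answer isNil and: [ in atEnd not ] ] whileTrue: [
            | byte |
            byte := in next.
            byte = 10 ifTrue: [ answer := #lf ].
            byte = 13 ifTrue: [
                answer := (in atEnd not and: [ in next = 10 ])
                    ifTrue: [ #crlf ]
                    ifFalse: [ #cr ] ] ].
        answer ifNil: [ #lf ] ]

LineTerminatorTest >> assertBytes: aByteArray detectAs: aSymbol
    "The shared part of every test: write the bytes, detect, compare."
    | file |
    file := FileSystem memory root / 'sample.txt'.
    file binaryWriteStreamDo: [ :out | out nextPutAll: aByteArray ].
    self assert: (self conventionOf: file) equals: aSymbol

LineTerminatorTest >> testEmptyFile
    self assertBytes: #[] detectAs: #lf

LineTerminatorTest >> testCRLF
    self assertBytes: #[97 13 10 98] detectAs: #crlf

LineTerminatorTest >> testLoneCR
    self assertBytes: #[97 13 98] detectAs: #cr
```

Each further case is then a one-line method, and swapping the in-memory file for a temporary file on disk only touches the helper.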

I'm still getting an unbroken record of AIs generating plausible but wrong

code and having to be yelled at repeatedly before it is about right.

On Tue, 12 Aug 2025 at 01:08, Richard O'Keefe raoknz@gmail.com wrote:

There is of course a major issue and several minor issues in that code.

The major issue is using #contents. That's just nuts. All we ever need

to hold in memory is 2 bytes, not the whole possibly extremely large file.
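To make the two-byte point concrete, here is a minimal Pharo Playground sketch that counts terminators while remembering only the current and the previous byte. It is not the astc code described in this message: the block name countTerminators and the sample bytes are invented for illustration, and it assumes the stream answers integers from next, as a binary stream does.

```smalltalk
"Sketch: count CR, LF and CRLF using only the current and the previous byte.
 countTerminators is an illustrative name, not an existing method."
| countTerminators counts |
countTerminators := [ :byteStream |
    | cr lf crlf previous |
    cr := 0. lf := 0. crlf := 0.
    previous := nil.
    [ byteStream atEnd ] whileFalse: [
        | byte |
        byte := byteStream next.
        (previous = 13 and: [ byte = 10 ])
            ifTrue: [ crlf := crlf + 1 ]
            ifFalse: [
                "A CR not followed by LF is a lone CR"
                previous = 13 ifTrue: [ cr := cr + 1 ].
                byte = 10 ifTrue: [ lf := lf + 1 ] ].
        previous := byte ].
    "A CR at the very end of the data is also a lone CR"
    previous = 13 ifTrue: [ cr := cr + 1 ].
    Dictionary new
        at: #cr put: cr;
        at: #lf put: lf;
        at: #crlf put: crlf;
        yourself ].

"Sample bytes: a CR LF b LF c CR"
counts := countTerminators value: #[97 13 10 98 10 99 13] readStream.
"counts now holds one each of #cr, #lf and #crlf"
```

For a real file the same block could be handed a binary stream, for example `aFilename asFileReference binaryReadStreamDo: [ :in | countTerminators value: in ]`, so the file is never loaded whole.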

The factoring in my library goes like this:

BasicInputStream>>lineTerminationConvention "where the core algorithm goes"

ReadOnlyByteArray>>lineTerminationConvention

^self readStream bindOwn: [:stream | stream lineTerminationConvention]

Filename>>lineTerminationConvention

^(FileStream read: self type: #binary) bindOwn: [:stream | stream lineTerminationConvention]

and having the method on ReadOnlyByteArray made it much easier to set up

test cases (which of course found a bug..)

Using Characters instead of bytes in this algorithm would be strongly

inadvisable.

In astc, one of the core functions of an external text input stream is to

take care of encoding, INCLUDING line terminator, so that Smalltalk code

gets a Character cr whatever the current line ends with, making it quite

impossible for the algorithm to work with Characters. (This is one reason

why the sketch I gave above does NOT include a definition for

ReadOnlyString>>lineTerminationConvention, although it easily could; the

only line termination that ever has any business turning up in a string is

Character cr. This was very carefully engineered and makes life SO much

simpler.)

Guessing the line termination convention is a matter of inspecting the

EXTERNAL ENCODING of a file, just like trying to guess whether it is Latin1

or UTF8 or UTF16. Almost by definition you have to look at the bytes;

that's what it MEANS to scrutinise the external representation.

I notice the presence of the magic number 0.8. Why 0.8 rather than

0.83666 or 0.7502? And of course this code raises an issue which I had not

thought about in my code, which is "why base the decision on the proportion

of the terminators rather than their recency?" By this I mean, suppose you

write a file under Windows, some sort of log perhaps, and you get 50 lines

with CR+LF terminators. And then you switch to appending to it from WSL,

and you append another 40 lines with LF terminators. Shouldn't that be

evidence that the convention used with the file has changed and it's now

an LF file rather than a CRLF one? Perhaps updates should be computed

using exponential decay, so that instead of

fooCount := fooCount + 1

we have

fooCount := fooCount * decayRate + 1.

barCount := barCount * decayRate.

ughCount := ughCount * decayRate.
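The log-file scenario can be tried directly in a Pharo Playground. The sketch below is only an illustration of the update rule above: the decay rate 0.9 is an arbitrary assumption, and the names crlfWeight, lfWeight, sawCRLF and sawLF are invented here.

```smalltalk
"Sketch: 50 CRLF terminators followed by 40 LF terminators,
 scored with exponentially decayed weights instead of plain counts."
| decayRate crlfWeight lfWeight sawCRLF sawLF |
decayRate := 0.9. "assumed value; the message does not suggest one"
crlfWeight := 0.0.
lfWeight := 0.0.

"Each observation boosts its own weight and fades the other"
sawCRLF := [
    crlfWeight := crlfWeight * decayRate + 1.
    lfWeight := lfWeight * decayRate ].
sawLF := [
    lfWeight := lfWeight * decayRate + 1.
    crlfWeight := crlfWeight * decayRate ].

50 timesRepeat: sawCRLF.
40 timesRepeat: sawLF.

lfWeight > crlfWeight. "true: the recent LF lines dominate"
```

With these numbers lfWeight ends near 9.85 and crlfWeight near 0.15, so the file reads as LF. Plain counts give 50 CRLF against 40 LF, which the 0.8 threshold in the quoted code would report as #mixed.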

It is not clear why #unknown is distinguished from #mixed. For my

purposes, it was essential that a definite

decision was made. For that reason, my code actually uses

(FileStream read: self type: #binary ifAbsent: [#[] readStream])

bindOwn: [...]

to open and close the file.

And that raises the question, "what is to be done when the file does not

exist". And in fact we need to consider four cases: file exists and is a

readable file, file exists and is a readable directory, file exists but the

program has no permission to read it, and file does not exist. Again, for

my purposes, the right answers were

- file exists and is a readable file: process it

- file exists and is a readable directory: let the opening method raise a ChannelWillNotOpen exception

- file exists and is not readable: let the opening method raise a ChannelWillNotOpen exception

- file does not exist: pretend it's empty.
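In Pharo terms, that policy might be sketched as follows. This is not the astc code: byteStreamFor is a made-up block name, the path is a placeholder assumed not to exist, and the sketch makes no claim about which error Pharo signals for a directory or an unreadable file; it simply lets whatever the open raises propagate.

```smalltalk
"Sketch: a missing file reads as empty; every other problem is left to the open.
 byteStreamFor is an illustrative name, not an existing Pharo or astc method."
| byteStreamFor stream |
byteStreamFor := [ :aFilename |
    | ref |
    ref := aFilename asFileReference.
    ref exists
        ifTrue: [ ref binaryReadStream ] "directories, unreadable files: the open decides"
        ifFalse: [ #[] readStream ] ].

"Placeholder path, assumed not to exist on this machine"
stream := byteStreamFor value: '/no/such/dir/no-such-file.txt'.
stream atEnd. "true when the path is missing: the file is treated as empty"
```

The caller is still responsible for closing the stream it gets back, and the file could appear or vanish between the exists check and the open; a production version would have to decide how much that matters.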

Calling #error: in this code seems rather pointless. Not that it's wrong,

although it is an arbitrary choice not to raise an exception. The point

is that a decision has been made here by the AI that is not grounded on

anything in the prompt, and reporting #unknown or #mixed or the magic

number 0.8 appear to also be decisions that are not grounded on anything in

the prompt. Certainly not on anything in my prompt.

I want to emphasise that there is nothing special about "determine the

line ending convention" and that my code is surely subject to criticism in

its turn. And that's precisely the point. It's not just AIs that smuggle

in decisions that are not grounded in the requirements and might make the

code technically correct but unfit for purpose. People do it all the

time. This is why code inspections are such a useful technique. There's a

dance between using the requirements to debug the code and using the code

to debug the requirements, where the (pragmatically) right thing for the

AI or the human to do is to come back and ASK "What should I do with an

empty file?" or "Can I assume that files will always be small compared with

Smalltalk's memory?" or "Would it be OK to look at just the first line

terminator?" or any other question left unanswered by the current prompt.

Perhaps it is up to us as programmers-using-AIs to start not by saying

"write me a method <jabberwock> to <burble>" but by saying "write me some

test cases for a method <jabberwock> that <burbles>."

On Mon, 11 Aug 2025 at 21:17, Cédrick Béler cdrick65@gmail.com wrote:

I gave Claude Opus 4.1 a shot (I did not test the result, as I am on a road trip).

Two artefacts were created.

First one

detectLineTerminationConvention: aFilename

"Detect the line termination convention used in a text file.

Returns #cr, #lf, #crlf, or #unknown"

| fileReference contents crCount lfCount crlfCount index |

"Create file reference and check if file exists"

fileReference := aFilename asFileReference.

fileReference exists ifFalse: [

^ self error: 'File does not exist: ', aFilename

].

"Read file contents as binary to preserve line endings"

contents := fileReference binaryReadStream contents.

contents isEmpty ifTrue: [ ^ #unknown ].

"Initialize counters"

crCount := 0.

lfCount := 0.

crlfCount := 0.

index := 1.

"Scan through the content looking for line terminators"

[ index <= contents size ] whileTrue: [

| currentByte nextByte |

currentByte := contents at: index.

"Check for CR (13) followed by LF (10)"

(currentByte = 13 and: [ index < contents size ]) ifTrue: [

nextByte := contents at: index + 1.

nextByte = 10

ifTrue: [

"Found CRLF"

crlfCount := crlfCount + 1.

index := index + 2 ]

ifFalse: [

"Found standalone CR"

crCount := crCount + 1.

index := index + 1 ]

] ifFalse: [

"Check for standalone LF (10)"

currentByte = 10 ifTrue: [

lfCount := lfCount + 1 ].

index := index + 1

]

].

"Determine the predominant line ending convention"

(crlfCount > 0 and: [ crlfCount >= crCount and: [ crlfCount >= lfCount ] ])

ifTrue: [ ^ #crlf ].

(lfCount > 0 and: [ lfCount >= crCount ])

ifTrue: [ ^ #lf ].

crCount > 0

ifTrue: [ ^ #cr ].

^ #unknown

Second one :

"FileLineEndingDetector - A utility class for detecting and converting

line endings"

Object subclass: #FileLineEndingDetector

instanceVariableNames: ''

classVariableNames: ''

package: 'FileUtilities'
